
As consumers start returning to stores again, will in-store promotions have the same impact as before? What will we use to replace sampling? And how will we know if new marketing tactics are effective?

With ongoing change, cohort analysis is more important than ever for consumer brands to evaluate the impact of marketing investments and quickly adjust accordingly. You can’t rely on what worked before, so measurement is critical. But you also can’t plan to compare performance against what happened last year, or even last week, as you seek to maximize the ROI of your revised marketing budgets.

Fortunately, our co-founders hail from the pioneers in Test and Learn and A/B testing. They are experienced in balancing statistical sophistication with “quick and dirty” results to provide practical guidance. This post will focus on how Marketing leaders can effectively answer two critical questions to shape marketing plans and optimize spend through 2020.

No matter what any Media Mix Modeling vendor will tell you, the old adage remains true: “Half the money I spend on advertising is wasted; the trouble is I don’t know which half.” Traditional advertising measurement relies on regression models to correlate marketing activities against sales performance, but of course, correlation doesn’t always equal causation!

As tempting as it is to correlate an effect (sales) with what happened directly before it (your genius marketing initiative), this trap is how so many great brands spend money on initiatives that may or may not generate any incremental revenue. If we take a cartoon example: Just because sales of ice cream go up during the summer, doesn’t mean that it’s the beautiful summer-themed end-caps at the grocery stores that caused those sales to rise. Sure, those end-caps might have had an impact, but they can’t take all the credit for consumers wanting a tasty treat when it’s hot outside—that would artificially pump up the ROI of those shopper marketing dollars and lead you to the wrong decision.

The most important part of making your next marketing decision is to isolate exactly how much sales lift those end-caps gave you, versus how much the hot weather gave you. The same goes for all those pricing changes you ran last year, all the TV ads, the circulars, the SEM…

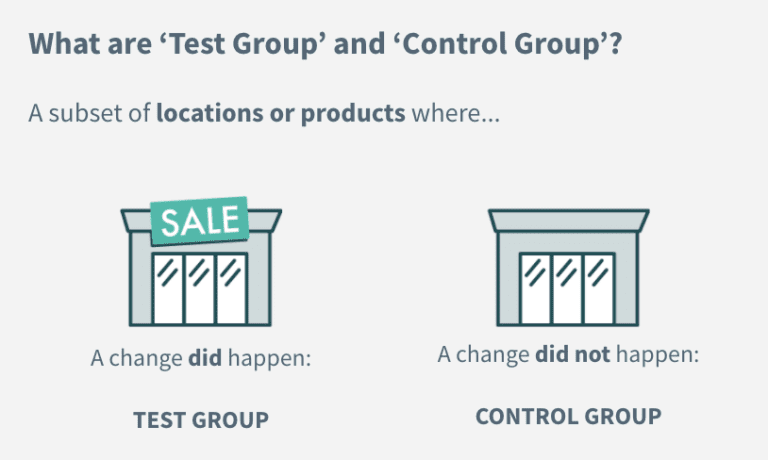

Thankfully, the Scientific Method has given us a great tool to attack this problem. Among its many advantages, the most powerful is its ability to isolate the specific impact of treatments (in our case, marketing activities). The downside is the difficulty of finding suitable Control Groups to compare to Test Groups.

Online channels have largely cracked this challenge through large-scale A/B testing and digital tracking of which users were exposed to which marketing variations (banner ads, landing pages, etc.). However, both split testing and tracking are a lot trickier in the offline world. A store either has a display or not, an expensive out-of-home ad is either put up or not; you can’t have a display or ad that’s only shown to every other person. Furthermore, these activities will only affect groups of consumers in certain areas, and for the vast majority of them you won’t have intent data, cookies, or clear purchase funnels to identify them when they make a purchase.

In this case, the Scientific Method would suggest that the best way to measure these marketing investments is to measure the demand from consumers in the geographies affected by those activities (Test Group), versus the demand from consumers in geographies without them but as similar as possible in every other way (Control Group), over the same time period to account for factors like seasonality, year-over-year growth, and week-to-week changes in consumer demand.

There may still be some noise, for example from shoppers who made a purchase without ever seeing the marketing, but the approach and its conclusions remain sound.
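To make the Test versus Control comparison concrete, here is a minimal sketch in Python. It assumes you have weekly unit sales for both groups, before and during the campaign; the function name `incremental_lift` and all of the numbers are hypothetical. Using a pre-campaign baseline to adjust for differences in size between the two groups is our assumption here, one common way to set up the comparison rather than the only one.

```python
from statistics import mean

def incremental_lift(test_pre, test_post, control_pre, control_post):
    """Estimate the lift in the Test Group that the Control Group cannot explain.

    Each argument is a list of weekly unit sales (hypothetical inputs).
    Returns (lift in units per week, lift as a fraction of expected sales).
    """
    # How much did demand change where nothing was done? This captures
    # seasonality, weather, and other market-wide effects.
    control_change = mean(control_post) / mean(control_pre)

    # What the Test Group would likely have sold without the marketing activity.
    expected_test = mean(test_pre) * control_change

    lift_units = mean(test_post) - expected_test
    lift_pct = lift_units / expected_test
    return lift_units, lift_pct

# Illustrative numbers only: ice cream sales rise everywhere in summer,
# but stores with end-caps rise more.
units, pct = incremental_lift(
    test_pre=[100, 100], test_post=[150, 150],
    control_pre=[80, 80], control_post=[100, 100],
)
print(f"Incremental lift: {units:.0f} units/week ({pct:.0%})")
```

In this made-up example, the Test stores grew 50%, but the Control stores grew 25% on their own, so only the remainder is credited to the end-caps.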

Accurately measuring demand from consumers is key in this exercise. For brands, that means combining both Point of Sale (POS) data from stores in the area, regardless of retailer, with ecommerce sales data for orders shipped to consumers in those areas. Layering all this information together not only gives you a rich set of Test versus Control data to isolate the overall impact of your spend, but can also help you understand how demand changes across retailers and channels.
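As a rough illustration of layering the two data sources together, the sketch below sums POS and ecommerce units by region and week. The field names (`region`, `ship_to_region`, `week`, `units`) and the helper `demand_by_region` are assumptions made for this example; your own data feeds will look different.

```python
from collections import defaultdict

def demand_by_region(pos_rows, ecommerce_rows):
    """Combine store POS and ecommerce units into total demand per (region, week).

    pos_rows: dicts with hypothetical keys "region", "week", "units"
    ecommerce_rows: dicts with hypothetical keys "ship_to_region", "week", "units"
    """
    totals = defaultdict(lambda: {"pos": 0, "ecommerce": 0})

    # POS rows from every retailer in a region are added together.
    for row in pos_rows:
        totals[(row["region"], row["week"])]["pos"] += row["units"]

    # Online orders count toward the region they were shipped to.
    for row in ecommerce_rows:
        totals[(row["ship_to_region"], row["week"])]["ecommerce"] += row["units"]

    # Keep the channel split so shifts between channels stay visible.
    return {
        key: {**channels, "total": channels["pos"] + channels["ecommerce"]}
        for key, channels in totals.items()
    }

# Illustrative rows only.
pos = [
    {"region": "Austin", "week": "2020-W20", "units": 40},  # retailer A
    {"region": "Austin", "week": "2020-W20", "units": 25},  # retailer B
]
online = [{"ship_to_region": "Austin", "week": "2020-W20", "units": 10}]
print(demand_by_region(pos, online))
```

Keeping the per-channel figures next to the total is a deliberate choice: the total feeds the Test versus Control comparison, while the split shows whether demand is moving between stores and online.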

So step-by-step, here are the best practices for Marketers to measure the impact of your pricing, promotion, advertising, and merchandising efforts using the Scientific Method:

“What’s the point of all that work?” Great question!

One way to think about the learnings is as experiments conducted to better plan for the coming months. After all, if you had true confidence in the ROI of every marketing campaign, making decisions for the coming year would be much simpler: you’d eliminate the underperforming ones, double down on campaigns that worked, and use the saved budget and effort to try out new ideas.

Let’s say, for instance, you used this framework to discover that the BOGO promotions you ran last Spring drove a higher incremental margin outcome than the 50% off promotion you ran the following fall. Furthermore, you discovered that the stores with extra end-cap fixtures designed to feature the BOGO promotion didn’t see any additional margin lift compared against stores without the end-cap fixtures. Then the outcome is pretty clear: Let’s forget about the 50% off promotions and let’s not spend the extra money on the end-caps.
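A decision like this can be reduced to a simple comparison once the Test and Control results are in. The sketch below is illustrative only: the function `incremental_margin`, the program names, and every figure are hypothetical, and it assumes margin per store has already been measured for matched Test and Control groups.

```python
def incremental_margin(test_margin_per_store, control_margin_per_store,
                       n_test_stores, program_cost=0.0):
    """Margin earned beyond what Control stores suggest, net of program cost."""
    per_store_lift = test_margin_per_store - control_margin_per_store
    return per_store_lift * n_test_stores - program_cost

# Made-up results for three programs.
programs = {
    # BOGO stores versus matched stores with no promotion.
    "BOGO": incremental_margin(1200, 1000, n_test_stores=50),
    # 50% off stores versus matched stores with no promotion.
    "50% off": incremental_margin(1050, 1000, n_test_stores=50),
    # BOGO stores with end-caps versus BOGO stores without them,
    # charging the (hypothetical) fixture cost to the program.
    "End-cap fixtures": incremental_margin(1200, 1200, n_test_stores=20,
                                           program_cost=4000),
}

# Rank programs from best to worst and flag the ones that lose money.
for name, margin in sorted(programs.items(), key=lambda item: item[1], reverse=True):
    verdict = "keep" if margin > 0 else "cut"
    print(f"{name}: {margin:+,.0f} incremental margin -> {verdict}")
```

Whether a program with a small positive result is worth keeping depends on what else the budget could fund, so treat the keep/cut threshold here as a placeholder.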

A lot has changed recently, but you still have to start somewhere in developing your plans for the rest of 2020. Focusing on what has worked in the past is at least a good place to start.

As you move forward, it would be ideal to discover whether a program is working while it is still running, whether it’s a strategy you’ve used before or a new one you’re trying out. If it’s not driving measurable impact, you can quickly decide to cut it and redirect those dollars to optimize your marketing spend. Conversely, if performance is strong, you can maximize what may be a limited window of opportunity, especially given how consumer behavior and our world seem to be changing week-to-week, if not day-to-day.

Say you started running a social media campaign in a couple of counties as they were getting ready to reopen. It put your brand top of mind and drove incremental sales lift in the following weeks compared to other counties in the same situation, delivering a positive ROI. If you knew that now, you could prepare similar campaigns to roll out in additional counties as they get ready to reopen too. The timely insight enables you to immediately make use of the learnings and expand the campaign while it still makes sense to do so.

To enable this type of quick action, the measurement steps outlined above don’t change; only the speed at which you need to complete them does. By the time you gather and manipulate the data, conduct the analysis and report on it, it could be too late to take advantage of any insights right away, unless you have a purpose-built retail analytics tool, like Alloy.

Alloy automatically extracts and integrates POS and online sales data from all your channels and provides flexible dashboards to review and analyze it in real-time. Our newest feature, Experiments, guides users through the workflow of creating Test and Control groups, so anyone can conduct cohort analysis and use the clear, easy-to-understand results to guide their next decision. It’s included as part of our standard offering and is just another way Alloy is helping our customers maximize the value of their data and navigate COVID-19.
